AWS re:Invent continues today, and so does Amazon’s list of announcements. Here are some of the important announcements the company made today at the event:

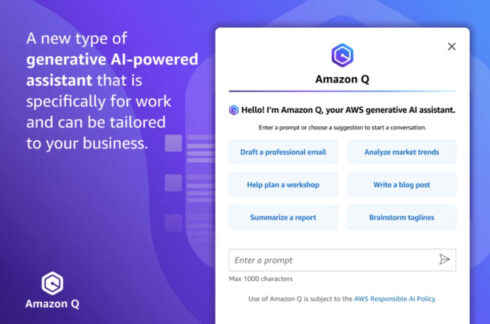

Amazon Q AI assistant announced

Amazon Q is a new generative AI-based assistant that is designed to help employees complete tasks related to their jobs. For example, it can help a developer build, deploy, and operate workloads, or help a call center employee create responses to send to customers.

“Amazon Q might detect that a customer is contacting your rental car company to change their reservation. It could then generate a response for you to send, detailing the company’s change policies, and guide you through the step-by-step process of updating the reservation,” the company explained.

It leverages a company’s information repositories, code bases, and enterprise systems to better understand the company’s specific needs and provide accurate information.

RELATED CONTENT: What Amazon announced at AWS re:Invent 2023 Day 1

Amazon Bedrock updated with guardrails, knowledge bases, and more

The generative AI platform was improved with a number of different features. Customers can now implement guardrails to ensure applications built using Amazon Bedrock align with responsible AI principles.

It can also now make use of knowledge bases, which help it provide customized and up-to-date responses.

The company also added agents to the platform, which enable an application to use the company’s systems and data sources to perform business tasks that require multiple steps.

And finally, the company added new ways to customize the models that Amazon Bedrock uses. It added support for fine-tuning in the Cohere Command, Meta Llama 2, and Amazon Titan models, and support for Anthropic Claude will be available soon.

AWS releases next-gen chips

The company released two families of chips: AWS Graviton4 and AWS Trainium2. According to the company, these innovations are designed to support a variety of different workloads, such as machine learning training and generative AI applications.

Compared to the current generation, the new Graviton4 chip provides up to 30% better compute performance, 50% more cores, and 75% more memory bandwidth. The Trainium2 chips provide four times the training performance of the first-generation Trainium chips, according to AWS.

Amazon S3 Express One Zone announced

This is a new storage class in S3 designed for latency-sensitive applications. It provides up to 10 times faster data access speeds compared to the S3 Standard class, and reduces request costs by up to 50% as well.

“Millions of customers rely on Amazon S3 for everything from low-cost archival storage to petabyte-scale data lakes, and they want to expand their use to support their most performance-intensive applications where every millisecond counts,” said James Kirschner, general manager of Amazon S3 at AWS. “Amazon S3 Express One Zone delivers the fastest data access speed for the most latency-sensitive applications and enables customers to make millions of requests per minute for their highly accessed datasets, while also reducing request and compute costs.”

Four new zero-ETL integrations released

Amazon Aurora PostgreSQL, Amazon DynamoDB, and Amazon RDS for MySQL now have integrations with Amazon Redshift. According to the company, these new integrations make it easy to connect data from these databases and analyze it in Amazon Redshift.

The company also announced an integration between Amazon OpenSearch Service and DynamoDB. This enables customers to do full-text and vector searches on their DynamoDB data.

“In addition to having the right tool for the job, customers need to be able to integrate the data that is spread across their organizations to unlock more value for their business and innovate faster,” said Dr. Swami Sivasubramanian, vice president of Data and Artificial Intelligence at AWS. “This is why we’re investing in a zero-ETL future, where data integration is no longer a tedious, manual effort, and customers can easily get their data where they need it. The new integrations announced today move customers toward this zero-ETL future, and we’re continuing to invest in this vision to make it easy for customers to integrate data from across their entire system, so they can focus on driving new insights.”